For the residents of Hawke’s Bay, February 2023 changed everything. When Cyclone Gabrielle tore across the North Island, it left a trail of destruction that reshaped the region’s relationship with its waterways. In the aftermath, the Heretaunga Hastings District Council (HDC) faced a critical mandate: rebuild not just infrastructure, but public trust.

For Mark Coetzee, Team Leader GIS and Information Intelligence, alongside colleagues Darren de Klerk (Deputy Group Manager & Director Infrastructure Delivery), Emma Kay (Marketing and Engagement Advisor) and Kate Boersen (Illuminate Science), the path forward rested on a clear philosophy: simplicity, trust and speed.

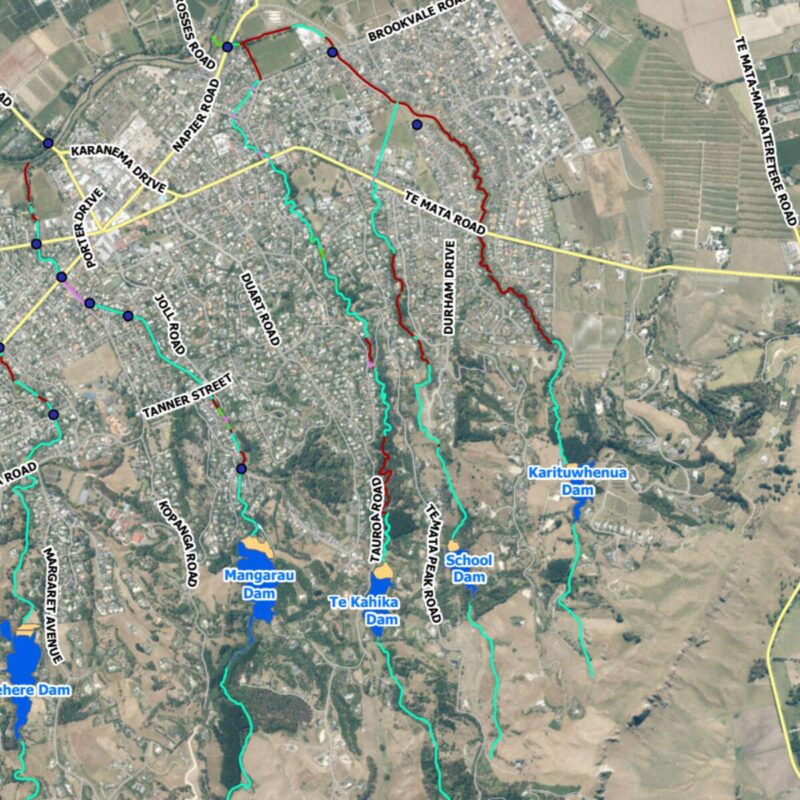

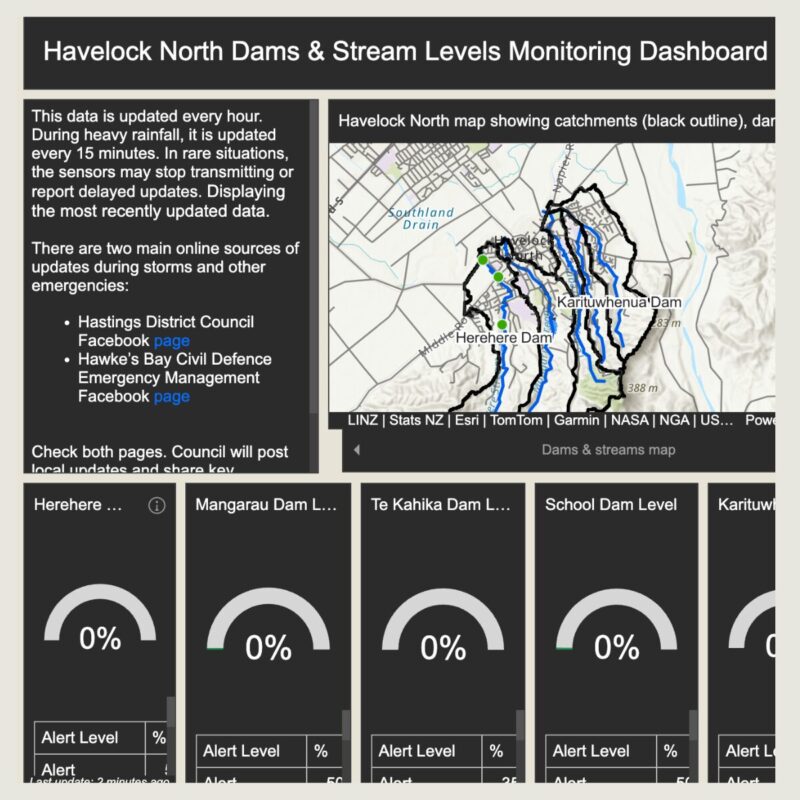

Protecting communities like Havelock North meant monitoring dam and stream levels in real time. Yet the data needed to do this lived in disconnected systems. Industrial SCADA telemetry, modern IoT sensors and global weather forecasting models all spoke entirely different digital languages. To support the Havelock North Streams Management Strategy Project, that data needed to be unified into a single, reliable view.

To bridge that divide between raw data and actionable insight, HDC turned to the integration power of FME.

Bringing Disparate Data Into a Single Voice

Understanding catchment health under pressure requires synthesising information from multiple systems at once. Dam telemetry, Adroit IoT sensor readings and ECMWF rainfall forecasts each offered valuable pieces of the puzzle, but none could speak to the others directly. SCADA systems relied on industrial protocols, IoT devices output nested JSON, and weather models delivered complex API-driven data.

Without a unifying engine, staff were left toggling between screens, manually assembling a picture of risk during moments where minutes mattered. HDC needed more than data access; they needed translation, orchestration and confidence.

FME as the Logic Engine

FME became that translator. HDC built an automated workflow that uses HTTPCaller transformers to reach out to each source: SCADA, Adroit’s IoT API and external weather services. FME handles authentication, ingests the returning JSON and extracts the forward-looking rainfall amounts needed for proactive planning.

But the real value lies in what happens after ingestion. With AttributeManager transformers and expression evaluators, the workflow calibrates raw sensor values against surveyed baselines to ensure readings on the dashboard reflect actual water depth rather than unprocessed integers. Conditional logic then assesses each value against HDC’s hazard thresholds, generating simple status categories like “Normal,” “Above Level 3” or “Above Level 4.”
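The calibrate-then-classify step can be sketched outside FME in a few lines of Python. This is only an illustration of the logic described above, not HDC's actual workspace: the site name, the baseline and the threshold values are hypothetical, and only the three status strings come from the dashboard categories mentioned in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    """Per-site calibration settings. All numbers used below are hypothetical."""
    name: str
    baseline_m: float  # surveyed baseline level
    level3_m: float    # depth above which the status is "Above Level 3"
    level4_m: float    # depth above which the status is "Above Level 4"


def calibrate(raw_level_m: float, site: Site) -> float:
    """Subtract the surveyed baseline from the raw reading to get water depth."""
    return round(raw_level_m - site.baseline_m, 3)


def classify(depth_m: float, site: Site) -> str:
    """Map a calibrated depth to a status string, checking the highest level first."""
    if depth_m > site.level4_m:
        return "Above Level 4"
    if depth_m > site.level3_m:
        return "Above Level 3"
    return "Normal"


# Hypothetical site and reading, for illustration only.
example_site = Site("example-dam", baseline_m=12.40, level3_m=1.50, level4_m=2.20)
depth = calibrate(14.15, example_site)
status = classify(depth, example_site)
print(example_site.name, depth, status)
```

Checking the highest threshold first means each reading receives exactly one status, which matches the single status string shown per gauge.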

In effect, FME becomes the interpreter between machine-level data and human-ready safety intelligence.

Delivering Confidence Through Automation

The move from manual checking to automated intelligence delivered immediate gains. Operators no longer need to monitor multiple systems; FME consolidates everything into a single, trusted feed, freeing up hours of staff time during weather events and reducing the risk of oversight.

Telemetry that once updated inconsistently now refreshes with purpose: standard data every 30 minutes, and critical dam readings accelerating to a 15-minute cycle during heavy rainfall.
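As a rough sketch of that two-speed schedule, the function below picks a refresh interval for a feed. The 30-minute and 15-minute cycles come from the description above; how "heavy rainfall" is actually detected is not stated, so the forecast-based trigger and its 10 mm/h value are assumptions made purely for illustration.

```python
from datetime import timedelta

STANDARD_INTERVAL = timedelta(minutes=30)    # standard data cycle
HEAVY_RAIN_INTERVAL = timedelta(minutes=15)  # critical dam readings in heavy rain

# Hypothetical trigger: the article does not say how heavy rainfall is defined.
HEAVY_RAIN_MM_PER_HOUR = 10.0


def refresh_interval(is_critical_dam: bool, forecast_mm_per_hour: float) -> timedelta:
    """Return how long to wait before the next refresh of a feed."""
    if is_critical_dam and forecast_mm_per_hour >= HEAVY_RAIN_MM_PER_HOUR:
        return HEAVY_RAIN_INTERVAL
    return STANDARD_INTERVAL


print(refresh_interval(is_critical_dam=True, forecast_mm_per_hour=14.0))
print(refresh_interval(is_critical_dam=False, forecast_mm_per_hour=14.0))
```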

While alerting is managed through a separate operational system, the FME workflow ensures that the data feeding those decisions is consistent, calibrated and trusted.

Because FME allowed the GIS team to rapidly prototype and deploy the system, HDC stood up a mission-critical dashboard in a fraction of the time custom development would have required, an essential win in restoring community confidence after the cyclone.

From Data Streams to Situational Awareness

Today, instead of piecing together scattered readings, operators open a live ArcGIS Enterprise Dashboard that acts as a central command centre. Real-time gauges and 24-hour trend graphs provide a clear narrative of dam and stream behaviour.

By automating the integration of SCADA, IoT, and weather data, HDC now has a system that embodies Mark’s philosophy of simplicity and speed, one designed to give the community clarity when the next storm arrives.

“The real win wasn’t just automating the feeds, it was standardising the logic. Once we used FME to calibrate values and apply hazard thresholds consistently, we removed a huge amount of uncertainty from the decision-making process.”

Mark Coetzee, Team Leader GIS & Information Intelligence

Technical Highlight: Under the FME Hood

For those who want a closer look behind the scenes, the HDC workflow is underpinned by a focused set of FME transformers. HTTPCaller connects to the Adroit IoT API and external weather services to fetch JSON payloads. JSONFragmenter breaks down the nested structures, especially the timeseries and precipitation objects, into usable attributes. AttributeManager applies the calibration logic, such as subtracting surveyed baseline levels from raw sensor values, so that every reading reflects actual water depth. Testers and conditional logic then evaluate each value against hazard thresholds to assign the appropriate status string displayed on the dashboard.
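For readers more familiar with scripting than with FME, the fragment-and-extract step is roughly equivalent to the Python sketch below. The payload shape, the field names (`time`, `amount_mm`) and the values are invented for illustration; the real Adroit and weather-service responses will differ.

```python
import json

# Hypothetical payload: the structure and values are made up for this example.
payload = json.loads("""
{
  "timeseries": [
    {"time": "2024-01-01T00:00:00Z", "precipitation": {"amount_mm": 4.2}},
    {"time": "2024-01-01T01:00:00Z", "precipitation": {"amount_mm": 11.8}}
  ]
}
""")


def flatten_timeseries(doc: dict) -> list[dict]:
    """Turn each nested timeseries entry into one flat row of attributes."""
    rows = []
    for entry in doc.get("timeseries", []):
        precip = entry.get("precipitation", {})
        rows.append({
            "time": entry.get("time"),
            "rain_mm": precip.get("amount_mm"),  # None if the field is missing
        })
    return rows


rows = flatten_timeseries(payload)
# Sum the forecast rainfall, skipping any entries without a value.
total_rain_mm = sum(r["rain_mm"] for r in rows if r["rain_mm"] is not None)
print(rows)
print(total_rain_mm)
```

Each flattened row plays the role of one feature leaving the fragmenter, ready for the calibration and threshold steps downstream.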